We Do Diligence: Making The MSSP Forrester Wave™ Evaluations

Security and risk pros rely on Forrester Wave™ evaluations to guide them through their purchase journey, help them understand what is and isn’t important, and help them avoid pitfalls when identifying strategic partners. This time, our analyses focused on two vendor categories: global and midsize managed security service providers (MSSPs), a market that is now almost 25 years old. While tried-and-true services such as managed intrusion detection/prevention systems and managed firewall are on the downswing, newer services such as managed detection and response; security orchestration, automation, and response; and managed security analytics are growing rapidly in their place. With this much change occurring in an industry all at once, we knew we’d have our hands full as we began the Wave process.

Forrester prides itself on the transparency of the Wave methodology. We also want to offer a unique perspective on the information we process and the effort that goes into our evaluative research. At the beginning of Q3, our refreshes of the global and midsize MSSP Wave evaluations went live. We’d like to share some statistics on what the team evaluating vendors experienced behind the scenes. Our colleague Melissa Bongarzone deserves the credit for this blog — first for generating the idea that led to it and second for conducting the aggregation and analysis of all the data!

Questionnaire Word Counts

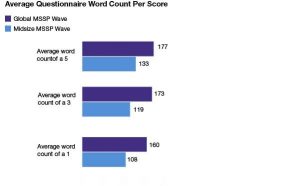

We cap the character count of vendor questionnaire responses to even the playing field, reduce redundancies, and cut through fluffy marketing language. At the same time, the cap helps us identify the most important and differentiating information about each product or service.

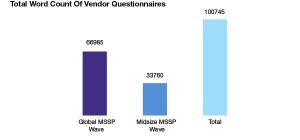

Unsurprisingly, a link exists between scores and word count. Vendors that reached the character limit scored higher. We ask vendors to describe incredibly complex product and service capabilities in a limited number of words, and comprehensive answers take real estate. Those that scored 1s either could not answer questions as thoroughly as their higher-scoring counterparts or tried to “tailor” answers to what they believed we were looking for. Between the two questionnaires submitted for the global and midsize Waves, we read over 100,000 words in total. To put this into perspective, the first two Harry Potter novels contain just over 76,000 and 85,000 words, respectively, and Harper Lee’s classic To Kill a Mockingbird clocks in at 100,388.

Hours Spent

In total, we spent 77 hours on the externally facing parts of the two Waves and approximately 95 hours on internal review. Here’s the breakdown of the activity:

The 77 vendor-facing hours covered activities such as executive strategy reviews and demos, customer reference calls, and fact checks. The internal hours went toward scoring, reviewing customer reference surveys, reviewing fact-check materials, and content editing. These figures exclude activities like internal communication, vendor communication, and collaboration with other analysts. It’s an appropriately intense process in terms of time, attention, and activity.

Emails Received

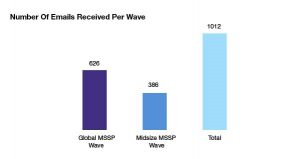

In total, our research associate received 1,012 emails from our Wave participants for these two Waves: 626 for the global Wave and 386 for the midsize Wave.

Participants in a Forrester Wave have questions, lots of them, and email tends to be the dominant channel for this exchange. One important part of the Wave process is that all included vendors receive the same time and attention. We make every effort to prevent confusion about deliverables, deadlines, wording of questions, and demos. As the gatekeeper of these interactions, the research associate faces a daily test of organization and effective communication. We work to make sure that participating vendors compete in an equal, transparent, fair process in which they can demonstrate the success, or failure, of their products and services.

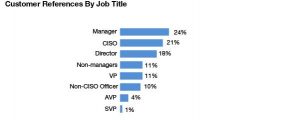

Customer References

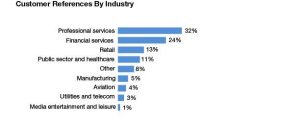

An integral part of our research is validating vendor information against the experience of their customers. These surveys and interviews give us insight into the efficacy of the product, the client’s experience, and the benefits and challenges of working with the vendor. Our surveys reach employees up and down the organization’s hierarchy, from hands-on practitioners who work directly with the product (such as analysts or engineers) to upper management, such as CIOs and CISOs, who were part of the decision-making process when selecting the vendor.

We Do This — And We Love It — So You Don’t Have To

This peek behind the curtain should provide some evidence of how seriously we take our analysis and how much effort goes into it, so that our end-user clients can make the best-informed decisions possible. Spending hundreds of hours reviewing answers, participating in demonstrations, and analyzing vendors is tough, especially when teams are already underwater with other responsibilities. That’s why we keep the end-user security and risk pros who are our clients as the focus of all our research.

(written with Melissa Bongarzone, research associate at Forrester)