Safety Shouldn’t Mean Censorship: Maintain Section 230 And Incentivize Moderation

If a party guest made a racist comment at the dinner table, would you blame the host? What if the host remained silent and did nothing to stop the guest? How about if the host gave that guest a microphone? These are the types of questions that the Supreme Court is tackling via two cases challenging Section 230, which states that:

No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.

Section 230 protects platforms and services from being held liable for the content posted by users. This means that the “party guest” is responsible for their actions, but the “host” is not. The two Supreme Court cases, which argue that Google and Twitter should be held responsible for terrorist-related content that they recommended or hosted, reveal two key problems. Social media platforms:

- Don’t consistently enforce content moderation policies. Most major social media platforms have robust content moderation policies to prevent users from posting content such as hate speech and misinformation. Despite the policies, some of this content still slips through the cracks, as documented in Forrester’s report, Funding Truth In The Misinformation Age. Forrester data from November 2022 found that almost a third of US online adults who stopped or plan to stop using Twitter say it’s because they found the content too hateful, and 21% say they don’t like the amount of disinformation being spread.

- Recommend harmful content to users. Social media algorithms act like microphones, amplifying the content they judge relevant to each user. This amplification becomes problematic when the “relevant content” is dangerous. A 14-year-old girl in the UK died by suicide after being continuously served videos and images on Instagram about self-harm and suicide. When harmful content stays on the platforms and algorithms help spread it, the combination is dangerous.

The Collective Online Experience Would Degrade

The media industry can’t ignore these two fundamental problems on the platform side, but eliminating Section 230 isn’t the answer. The internet experience that we’ve come to know would fall apart, inviting heavy-handed government intervention and content censorship. A flood of lawsuits could force platforms to dismantle their algorithms and overreach on content removal. Repealing this law would:

- Make content less relevant. While the algorithms sometimes promote harmful content, they also serve users harmless, entertaining, and helpful content that adds value to their experience. Without a recommendation engine, the content becomes less personalized, rendering the platforms less valuable.

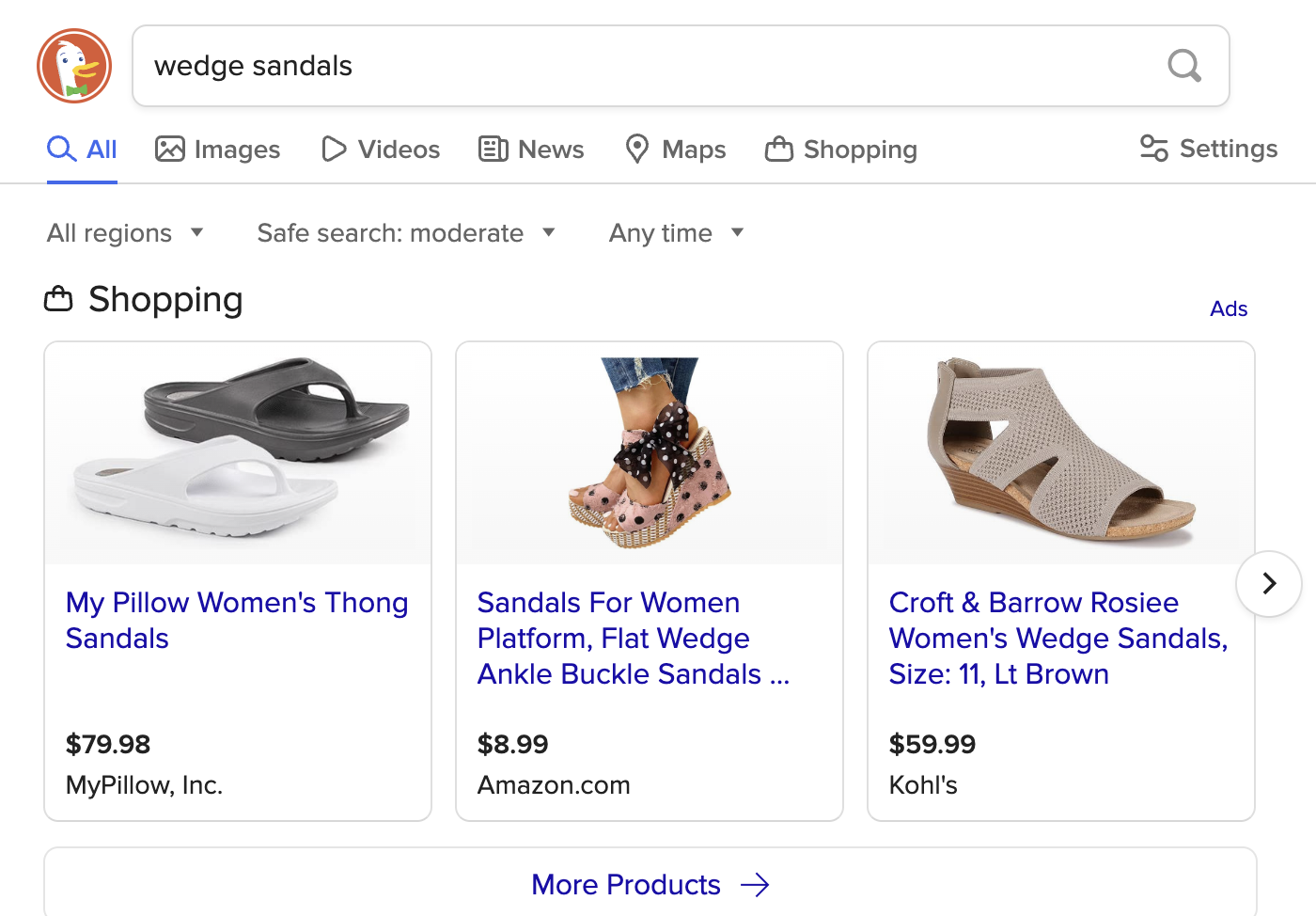

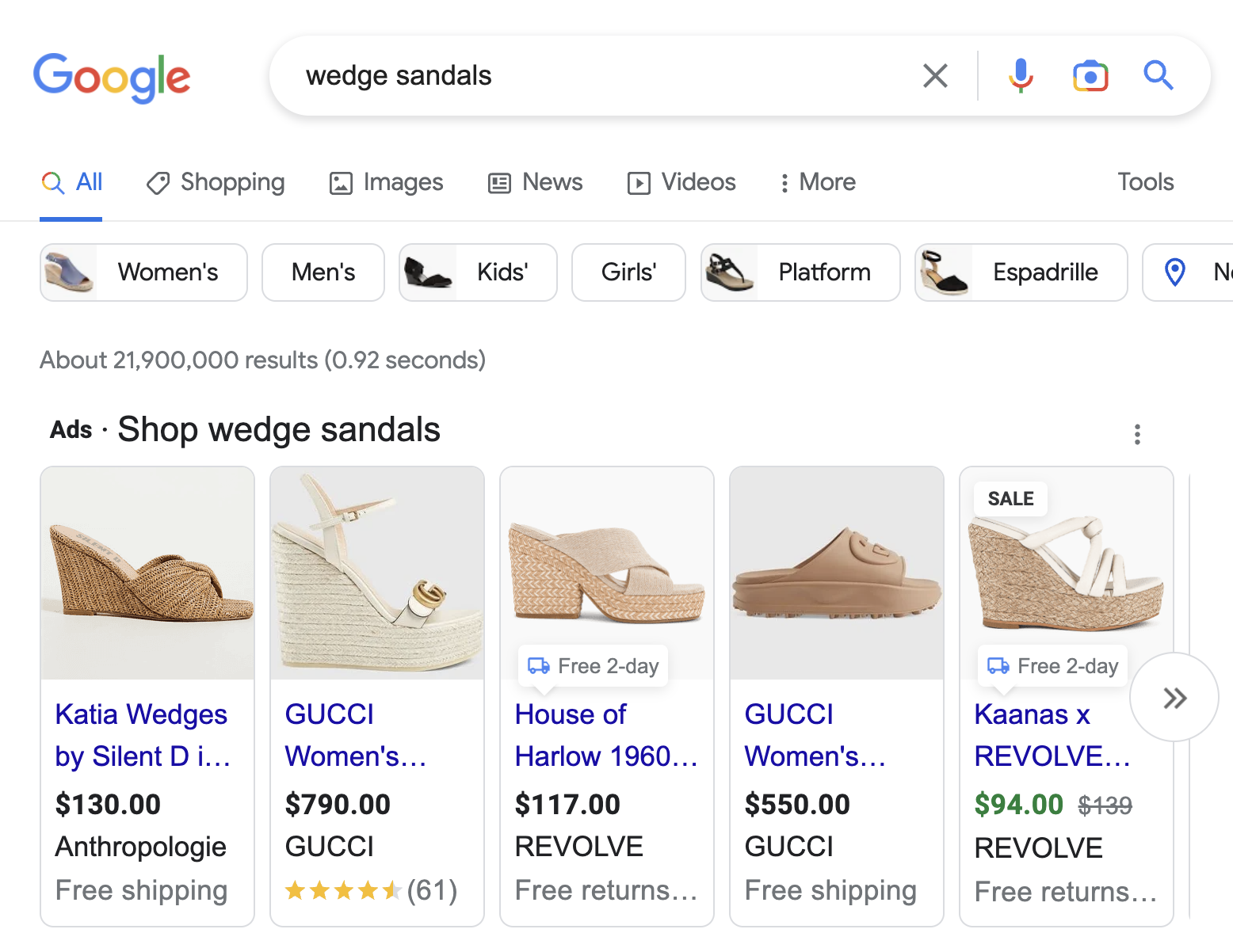

- Hurt the ad experience. Personalized targeting also makes ads more effective. DuckDuckGo is a privacy-first search engine that serves ads only based on the keywords that you search, with no other personalization. The search ad results are significantly different from Google (see Figure 1). Google serves me brands, styles, and stores that I know and love. It knows my preferences, which makes the ads more relevant and click-worthy.

- Decimate platforms’ business. Advertising makes up the majority of social media platforms’ revenue. If the ads are less relevant, users won’t engage, and brands aren’t going to see the same business results. Advertisers will move their dollars into other, more impactful media channels.

Figure 1: Search Ad Results More Relevant On Google Than DuckDuckGo

Policy Enforcement Solves The Core Problem

Google doesn’t want terrorist content on YouTube, and Meta doesn’t want to spread disinformation. This much is clear from their community guidelines. The core issue isn’t that recommendation algorithms spread content; it’s that harmful content remains in the system. Platforms must start enforcing their own policies consistently, and marketers must give them an incentive to do so. Use the leverage you have (ad dollars) to push your media partners to clean up the experience.

Forrester clients: To discuss this topic further, schedule a guidance session.