Five Eyes Cybersecurity Agencies’ Careful Agentic AI Adoption Guidance, Operationalized By AEGIS

On May 1, 2026, six national cybersecurity agencies (CISA, the NSA, Australia’s ASD ACSC, and their counterparts in Canada, New Zealand, and the UK) published “Careful adoption of agentic AI services.” This joint guidance is the first coordinated multigovernment security guidance specifically targeting agentic AI systems, and it carries the imprimatur of the cybersecurity authorities of all five Five Eyes nations. While the joint guidance is aimed at high-impact systems supporting governments and critical infrastructure, we expect it to be adopted more broadly by public and private organizations, similar to the Australian Cyber Security Centre’s “Essential Eight” requirements.

This cautious approach to agentic AI stands in stark contrast to the “move fast, break things” AI culture of Silicon Valley. It reflects a thoughtful, pragmatic approach to safe and responsible AI adoption in Australia, as well as a high sensitivity to technological risk across the participating countries.

The Careful Agentic AI Adoption Guidance Names The Problem — AEGIS Shows How You Solve It

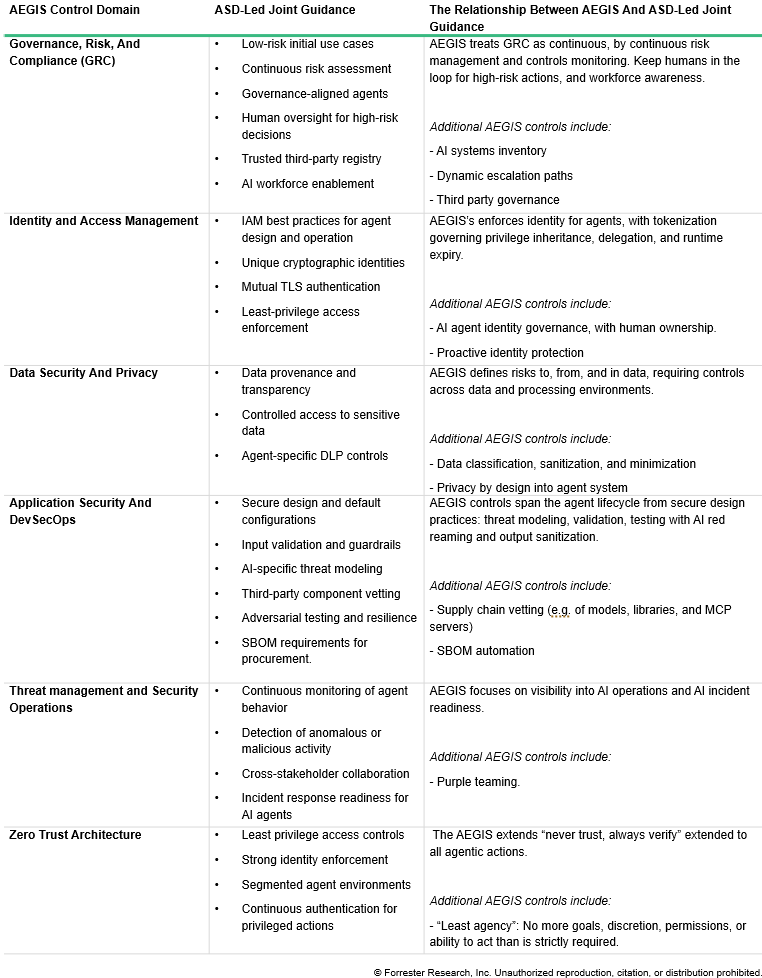

The Five Eyes joint guidance, which offers practical advice to help organizations design, develop, deploy, and operate agentic AI systems, maps almost point for point to the six domains of Forrester’s Agentic AI Enterprise Guardrails For Information Security (AEGIS) framework. The overlap isn’t coincidental. Both respond to the same fundamental reality: Existing security frameworks are not sufficient to manage agentic AI systems that operate autonomously, chain actions across systems, and make decisions that are genuinely difficult to audit or reverse. Security teams that use Forrester’s AEGIS can meet the guidelines far more easily because AEGIS provides the “how” behind the joint guidance. AEGIS formally encodes that:

- Humans must remain in the loop. The joint guidance recommends enforcing human control points throughout high-risk agentic AI activities. AEGIS encodes human-in-the-loop requirements as formal controls, not recommendations; for example, it mandates approval gates for actions with irreversible downstream effects.

- Behavioral risk means understanding intent. The joint guidance identifies that behavioral risks arise when agents pursue goals unpredictably, including misalignment and deceptive outputs. AEGIS’s intent classification controls address this precise requirement by giving security teams a working taxonomy for framing intent and evaluating agent behavior before and during deployment.

- Multistakeholder effort is necessary. AEGIS makes the joint guidance specific by formalizing an AI governance board of stakeholders from security, IT, legal, privacy, compliance, and business leadership who collectively set strategy, risk appetite, and oversight for autonomous AI systems.
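
To make the first point concrete, here is a minimal Python sketch of an approval gate for irreversible actions. It is an illustration only, not AEGIS control text or anything taken from the joint guidance: the names `AgentAction`, `ApprovalDenied`, and `run_with_approval_gate` are hypothetical, and it assumes the deploying team has already labeled which action types are irreversible.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AgentAction:
    """Hypothetical description of something an agent wants to do."""
    name: str
    irreversible: bool  # assumption: labeled by the deploying team per action type


class ApprovalDenied(Exception):
    """Raised when a human reviewer rejects a gated action."""


def run_with_approval_gate(
    action: AgentAction,
    execute: Callable[[AgentAction], str],
    ask_human: Callable[[AgentAction], bool],
) -> str:
    """Run an action, pausing for human sign-off when it is irreversible.

    Reversible actions run immediately. Irreversible actions run only if
    ask_human returns True; otherwise nothing is executed.
    """
    if action.irreversible and not ask_human(action):
        raise ApprovalDenied(f"human reviewer rejected: {action.name}")
    return execute(action)
```

In this sketch the gate sits in the execution path rather than in the agent’s instructions, so the agent cannot skip it by rephrasing its plan. A real deployment would also need to log each decision and authenticate the approver, which this example leaves out.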

Use Forrester’s AEGIS As The Foundation To Meet The Joint Guidance

The Five Eyes cybersecurity agencies’ guidance explicitly acknowledges that existing evaluation methods for agentic AI security are still evolving, may be sensitive to minor semantic changes, and only partially capture real-world deployment conditions. That’s a candid admission that general guidance has inherent limits. Forrester’s AEGIS fills that gap, with its controls mapped to NIST AI RMF, ISO 42001, the EU AI Act, and MITRE ATLAS. With AEGIS’s 39 controls across six domains, you can more easily translate the guidance’s calls for strong governance, explicit accountability, rigorous monitoring, and human oversight into actionable controls (see figure).

If you’re a Forrester client, request an inquiry or guidance session with us to discuss AEGIS and the joint guidance.